Use Airflow to author workflows as directed acyclic graphs (DAGs) of tasks. Airflow is a platform to programmatically author, schedule, and monitor workflows. The options you need are set in the bitnami/airflow Helm values. Note: I recommend shifting from this to any other maintained implementation of Airflow for production workloads, as it is archived now and will no longer be patched. Apache Airflow uses a git-sync container to keep its collection of DAGs in sync with the content of the GitHub repository, and an SSH key is used to authenticate to that repository.
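
To make the "DAG of tasks" idea concrete, here is a minimal sketch that uses only Python's standard library, not the Airflow API. The task names and the `execution_order` helper are hypothetical, chosen purely for illustration. It shows how dependencies between tasks determine a valid run order, and why a cycle is rejected.

```python
from graphlib import CycleError, TopologicalSorter

# Hypothetical tasks: each key maps to the set of tasks it depends on.
pipeline = {
    "extract": set(),
    "transform": {"extract"},
    "load": {"transform"},
    "report": {"load"},
}


def execution_order(dependencies):
    """Return the tasks in an order that respects every dependency.

    Raises graphlib.CycleError if the graph is not acyclic.
    """
    return list(TopologicalSorter(dependencies).static_order())


if __name__ == "__main__":
    print(execution_order(pipeline))

    # A cycle cannot be scheduled, which is why workflows must be acyclic.
    try:
        execution_order({"a": {"b"}, "b": {"a"}})
    except CycleError as err:
        print("not a DAG:", err)
```

A scheduler like Airflow does far more than this (retries, scheduling intervals, distributed workers), but the ordering constraint is the same.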

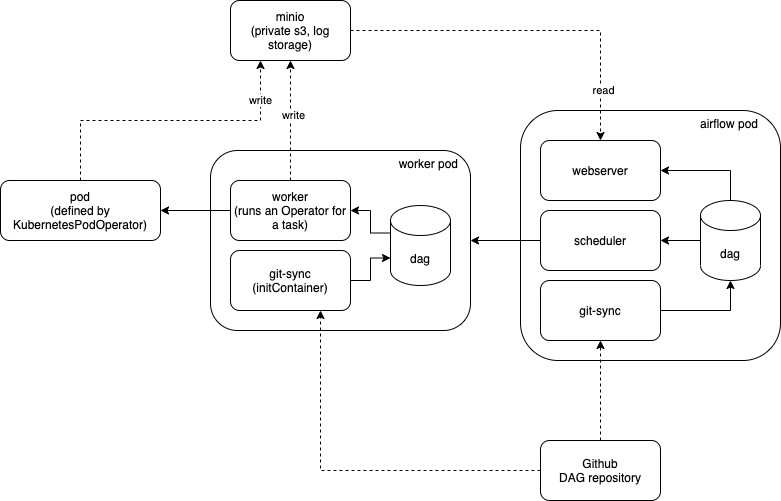

Principles. Scalable: Airflow has a modular architecture and uses a message queue to orchestrate an arbitrary number of workers. Apache Airflow is one of the projects that belong to the Apache Software Foundation (ASF). It is a requirement for all ASF projects that they can be installed using official sources released via Official Apache Downloads.

We use the Airflow Helm charts to deploy Airflow. Kubernetes is described on its website as: "Kubernetes (K8s) is an open-source system for automating deployment, scaling, and management of containerized applications." The command deploys Airflow on the Kubernetes cluster in the default configuration. The Parameters reference section lists the parameters that can be configured during installation. Listing 18.6, "Configuring a Git-sync sidecar with the Airflow Helm chart", starts with: helm upgrade airflow …

To initiate your Airflow GitHub integration, follow the steps below: Step 1: Select Home > Cluster.

It's not at all clear to me what you mean by "push the files to the dags" (which files to which DAGs), but note that git push does not push files, it pushes commits.

My company uses git-sync to sync zipped DAGs to Airflow. I wonder if I can let Airflow pick up only the zipped DAGs in a specific folder, such as dags-dev in a git branch, rather than all the zipped DAGs? The Airflow git-sync configuration looks like this:

    AIRFLOW_KUBERNETES_DAGS_VOLUME_SUBPATH: repo # must match AIRFLOW_KUBERNETES_GIT_SUBPATH
    AIRFLOW_KUBERNETES_GIT_DAGS_FOLDER_MOUNT_POINT: /opt/airflow/dags
    AIRFLOW_KUBERNETES_GIT_SYNC_CONTAINER_REPOSITORY: :4567/eng/external-images//git-sync
    AIRFLOW_KUBERNETES_GIT_SYNC_CONTAINER_TAG: v3.1.1

It seems this implementation does not support a git subpath. Besides, if you look behind the subpath method, it is a git clone followed by directory filtering, and git-sparse-checkout, a newer git feature for partial clones, is still experimental. Hence one solution can be to use dags-path to point to the sub-directory in the repository.
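
To illustrate the "only pick up zipped DAGs in a specific folder" idea, here is a minimal Python sketch. It is not part of Airflow or git-sync: `zipped_dags_in` is a hypothetical helper, and the mount point, subpath, and `dags-dev` folder are simply the values quoted in the question. It only shows which archives would be visible if the DAGs folder pointed at that sub-directory instead of the repository root.

```python
from pathlib import Path


def zipped_dags_in(mount_point: str, subpath: str, folder: str) -> list[str]:
    """List the .zip archives directly inside <mount_point>/<subpath>/<folder>.

    Hypothetical helper for illustration; Airflow does not call this.
    Archives elsewhere in the synced repository are ignored.
    """
    target = Path(mount_point) / subpath / folder
    if not target.is_dir():
        return []
    return sorted(p.name for p in target.glob("*.zip"))


if __name__ == "__main__":
    # Values taken from the configuration in the question; "dags-dev" is the
    # folder the question wants Airflow to be limited to.
    print(zipped_dags_in("/opt/airflow/dags", "repo", "dags-dev"))
```

In a real deployment the equivalent effect would come from pointing Airflow's DAGs folder at the sub-directory, which matches the dags-path suggestion; the exact setting name depends on the chart or image you use.
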
